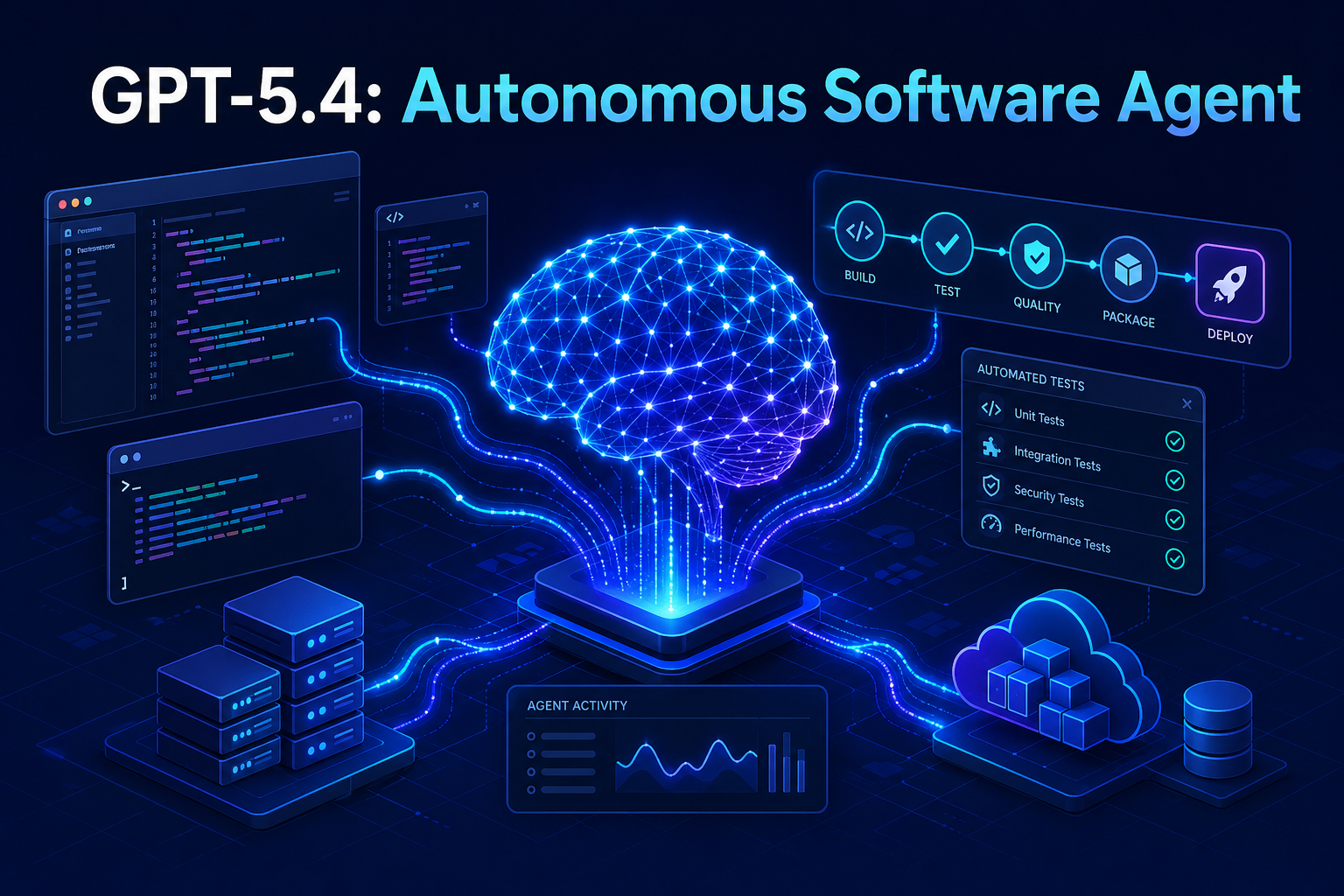

What if your AI assistant could read a Jira ticket, clone the repo, write the patch, run the tests, open a pull request, and ping the reviewer — without you typing a single command? That is the pitch behind GPT-5.4, the model OpenAI announced this week, and it is the first release the company is positioning less as a chatbot and more as a true software agent.

OpenAI claims GPT-5.4 can autonomously execute multi-step software workflows that previously required a human in the loop at every junction. For developers, that is either an enormous productivity unlock or a fresh source of anxiety, depending on how you read the demos. This guide walks through what GPT-5.4 actually is, what it can do today, where it still falls short, and how you can start integrating agentic workflows into real engineering work.

What Is GPT-5.4 and Why Does It Matter?

GPT-5.4 is OpenAI’s latest large language model, optimized for long-horizon, multi-step task execution rather than single-turn conversation. Unlike earlier GPT releases that focused on raw reasoning quality, GPT-5.4 ships with native tool-calling, persistent task memory, and a planner-executor architecture that can decompose a vague engineering request into a concrete sequence of file reads, code edits, shell commands, and verification steps.

That distinction matters. Previous models could draft a function if you asked nicely. GPT-5.4 is built to finish the ticket — including the parts a junior engineer would normally forget, like updating the changelog, regenerating the OpenAPI spec, and re-running the linter after a rebase.

The shift from “AI that writes code” to “AI that ships code” is the headline. Whether that headline holds up under production load is the open question every engineering leader is now asking.

Key Capabilities of the GPT-5.4 Autonomous Software Agent

OpenAI’s announcement post and accompanying technical report describe several capabilities that distinguish GPT-5.4 from prior agentic systems like AutoGPT-style scaffolds built on GPT-4 or GPT-5.

- Long-horizon task planning: Sustained reasoning across sessions of up to 8 hours of agent runtime, with checkpointed state.

- Native tool orchestration: Built-in connectors for shell, file system, web search, browser automation, and the OpenAI `code_interpreter`, with parallel tool calls.

- Self-verification loops: The model writes tests, runs them, reads the failures, and patches its own code before declaring a task done.

- Structured task memory: A persistent scratchpad the agent can edit, query, and prune, modeled on how senior engineers keep design notes.

- Permission-aware execution: A capability system that requires the agent to request elevated privileges (e.g., `git push`, deploys) rather than assume them.
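The capability list above is OpenAI's description, not a published implementation. To make "structured task memory" and "checkpointed state" concrete, here is a minimal sketch of a scratchpad that an agent harness of your own could keep. The `Scratchpad` class, its methods, and the JSON checkpoint file are hypothetical illustrations, not part of any OpenAI SDK.

```python
import json
from dataclasses import dataclass, field
from pathlib import Path


@dataclass
class Scratchpad:
    """Hypothetical agent scratchpad: keyed notes the agent can edit, query, and prune."""

    notes: dict[str, str] = field(default_factory=dict)

    def write(self, key: str, text: str) -> None:
        # Insert a new note or overwrite an existing one
        self.notes[key] = text

    def query(self, term: str) -> dict[str, str]:
        # Case-insensitive substring search over note bodies
        needle = term.lower()
        return {k: v for k, v in self.notes.items() if needle in v.lower()}

    def prune(self, prefix: str) -> int:
        # Drop every note whose key starts with prefix; return how many were removed
        stale = [k for k in self.notes if k.startswith(prefix)]
        for k in stale:
            del self.notes[k]
        return len(stale)

    def checkpoint(self, path: Path) -> None:
        # Persist the notes so an interrupted run can resume from this point
        path.write_text(json.dumps(self.notes, indent=2), encoding="utf-8")

    @classmethod
    def restore(cls, path: Path) -> "Scratchpad":
        # Rebuild a scratchpad from a previous checkpoint file
        return cls(notes=json.loads(path.read_text(encoding="utf-8")))
```

Checkpointing after every step is what lets a multi-hour run resume where it stopped instead of replanning from scratch.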

None of these ideas is novel in isolation. Open-source frameworks like LangGraph and AutoGen, along with commercial agents like Devin, have explored each one. What is new is the integration: a single model trained end-to-end on agent traces rather than stitched together with prompt engineering.

How GPT-5.4 Executes Multi-Step Software Workflows

Under the hood, GPT-5.4 follows a planner-executor-critic loop. The model first reads the task, then writes an explicit plan to a scratchpad, then executes steps one at a time while a critic pass evaluates progress after each step. If the critic flags drift, the planner is invoked again to revise the remaining steps.
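The loop described above can be sketched in a few lines of Python. This illustrates the general planner-executor-critic pattern under simple assumptions, not GPT-5.4's internals: the `planner`, `executor`, and `critic` callables are hypothetical stand-ins you would back with model calls and tools.

```python
from typing import Callable


def run_loop(
    task: str,
    planner: Callable[[str, list[str]], list[str]],
    executor: Callable[[str], str],
    critic: Callable[[str, str], bool],
    max_replans: int = 3,
) -> list[tuple[str, str]]:
    """Plan, execute one step at a time, and replan the remainder when the critic flags drift."""
    history: list[tuple[str, str]] = []
    plan = planner(task, [])  # initial plan: nothing attempted yet
    replans = 0
    while plan:
        step = plan.pop(0)
        result = executor(step)
        history.append((step, result))
        if critic(step, result):
            continue  # on track: move to the next planned step
        if replans >= max_replans:
            raise RuntimeError(f"gave up after {replans} replans at step {step!r}")
        replans += 1
        # Drift detected: revise the remaining steps given everything attempted so far
        plan = planner(task, [s for s, _ in history])
    return history
```

Capping `max_replans` matters: without it, a critic that keeps flagging drift would keep the agent replanning forever.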

The Workflow Lifecycle

- Ingestion: The agent reads the task description, attached files, repository state, and any linked issues.

- Planning: It produces a numbered plan with explicit success criteria for each step.

- Execution: Tools are called sequentially or in parallel. Output is written to the scratchpad.

- Verification: Tests run, lints pass, and the critic compares results against the success criteria.

- Reporting: A summary, diff, and confidence score are returned to the user, who approves or rejects.
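One way to picture the Planning and Verification stages is a plan whose steps each carry a checkable success criterion. The sketch below assumes criteria can be written as predicates over a step's textual output. `PlanStep`, `verify_and_report`, and the fraction-passed "confidence" are hypothetical simplifications; OpenAI has not said how its confidence score is computed.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class PlanStep:
    number: int
    action: str
    success: Callable[[str], bool]  # explicit, checkable success criterion


def verify_and_report(steps: list[PlanStep], outputs: dict[int, str]) -> dict:
    """Compare each step's output with its criterion and build the report for the user."""
    if not steps:
        raise ValueError("empty plan")
    failed = [s.number for s in steps if not s.success(outputs.get(s.number, ""))]
    passed = len(steps) - len(failed)
    return {
        "summary": f"{passed}/{len(steps)} steps met their success criteria",
        "failed_steps": failed,
        # Naive stand-in for a confidence score: the fraction of criteria met
        "confidence": passed / len(steps),
        # Any unmet criterion sends the result back for human review
        "needs_human_review": bool(failed),
    }
```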

A Minimal API Example

The new responses endpoint accepts a single high-level instruction and a list of allowed tools. Here is a stripped-down Python example that asks GPT-5.4 to refactor a utility module and open a pull request.

```python
from openai import OpenAI

client = OpenAI()

# Kick off an agentic task. The model decides which tools to call.
response = client.responses.create(
    model="gpt-5.4",
    instructions=(
        "Refactor src/utils/date_helpers.py to use datetime.fromisoformat "
        "instead of strptime. Update tests. Open a PR titled 'refactor: "
        "modern date parsing' against the main branch."
    ),
    tools=[
        {"type": "shell"},
        {"type": "file_system", "root": "/workspace/repo"},
        {"type": "github", "repo": "acme/billing-service"},
    ],
    max_tool_calls=50,  # safety cap on autonomy
    require_approval=["push"],  # human approval required before push
)

print(response.output_text)
# metadata can be empty, so fall back to an empty dict before looking up the PR URL
print("PR URL:", (response.metadata or {}).get("pr_url"))
```

Notice three things. First, there is no manual orchestration loop — the SDK handles the planner-executor cycle internally. Second, max_tool_calls caps how long the agent can run before it must report back. Third, require_approval lets you carve out specific actions (like pushing to a remote) that always need a human signature, no matter how confident the model is.
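If you drive the loop yourself instead of relying on the SDK, the same two guardrails are easy to enforce locally. This sketch assumes every tool call is routed through a `Guard` object before it runs. The class, the `approve` callback, and the action names are hypothetical; they mirror the `max_tool_calls` and `require_approval` parameters in spirit only.

```python
from dataclasses import dataclass, field
from typing import Callable


class BudgetExceeded(Exception):
    """Raised when the agent hits its tool-call cap and must report back."""


@dataclass
class Guard:
    max_tool_calls: int
    require_approval: set[str]           # actions that always need a human
    approve: Callable[[str, str], bool]  # asks a person; True means allow
    calls_made: int = 0
    log: list[str] = field(default_factory=list)

    def check(self, action: str, detail: str) -> bool:
        """Return True if the tool call may run; raise once the budget is spent."""
        if self.calls_made >= self.max_tool_calls:
            raise BudgetExceeded(f"cap of {self.max_tool_calls} tool calls reached")
        self.calls_made += 1  # denied calls count against the budget too
        if action in self.require_approval and not self.approve(action, detail):
            self.log.append(f"DENIED {action}: {detail}")
            return False
        self.log.append(f"ALLOWED {action}: {detail}")
        return True
```

Counting denied calls against the budget keeps an agent from spinning on an action a human has already blocked.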

GPT-5.4 vs. Previous OpenAI Models

The jump from GPT-5 to GPT-5.4 is not primarily about raw intelligence. It is about autonomy budget — how far the model can run before drift, hallucination, or a missed dependency derails it. The table below summarizes the practical differences.

| Capability | GPT-4 Turbo | GPT-5 | GPT-5.4 |
|---|---|---|---|
| Context window | 128K tokens | 400K tokens | 1M tokens |
| Sustained agent runtime | ~10 minutes | ~1 hour | ~8 hours |
| Native parallel tool calls | No | Limited | Yes |
| Self-verification loop | Manual scaffolding | Manual scaffolding | Built-in |
| SWE-bench Verified score | ~28% | ~62% | ~78% (claimed) |

Treat the SWE-bench number with healthy skepticism — vendor benchmarks have a history of regressing on independent reruns. Still, even a 70% score on real GitHub issues would be a meaningful jump over the state of the art six months ago.

Practical Use Cases for Agentic Software Workflows

Where does an autonomous coding agent actually earn its keep? After a week of community demos, a few patterns are emerging.

Bulk Refactors and Migrations

Migrating a codebase from moment.js to date-fns, upgrading from Python 3.9 to 3.13, or porting a service from REST to gRPC are tedious but well-defined. GPT-5.4 can grind through hundreds of files, run the test suite after each change, and isolate the few cases that need human judgment.
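The keep-or-revert pattern behind a bulk migration looks roughly like this. It is a sketch that assumes the codebase fits in a `dict` mapping path to source and that you supply `transform` (a codemod) and `run_tests` (a wrapper around your test suite). `migrate` is a hypothetical helper, not part of any agent SDK, and the test below uses a toy string rewrite rather than a real moment.js migration.

```python
from typing import Callable


def migrate(
    files: dict[str, str],
    transform: Callable[[str], str],
    run_tests: Callable[[dict[str, str]], bool],
) -> tuple[dict[str, str], list[str]]:
    """Apply transform file by file, keeping a change only if the suite still passes.

    Returns the updated sources and the paths that need human judgment.
    """
    current = dict(files)  # never mutate the caller's copy
    needs_human: list[str] = []
    for path in sorted(current):
        original = current[path]
        candidate = transform(original)
        if candidate == original:
            continue  # nothing to migrate in this file
        current[path] = candidate
        if not run_tests(current):
            current[path] = original  # revert and flag for a person
            needs_human.append(path)
    return current, needs_human
```

Changes that break the suite are reverted one file at a time, so the output is a mostly migrated codebase plus a short list of files that need a person.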

Issue Triage and First-Pass Fixes

Pointing GPT-5.4 at a backlog of “good first issue” tickets and letting it draft PRs overnight is the most common pattern early adopters report. Reviewers still need to verify, but the agent eliminates the cold-start cost.

End-to-End Test Authoring

Given a feature spec and a running staging environment, the agent can write Playwright or Cypress tests that exercise the happy path and several edge cases, then iterate until they pass deterministically.
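"Pass deterministically" is checkable: run the new test several times and require every run to agree. A minimal sketch, assuming `test` is a zero-argument callable that returns `True` on a pass. In practice you would wrap a Playwright or Cypress invocation, which is not shown here; `classify` is a hypothetical name.

```python
from collections import Counter
from typing import Callable


def classify(test: Callable[[], bool], runs: int = 10) -> str:
    """Run a test repeatedly and label it 'pass', 'fail', or 'flaky'."""
    outcomes = Counter(test() for _ in range(runs))
    if outcomes[True] == runs:
        return "pass"
    if outcomes[False] == runs:
        return "fail"
    return "flaky"  # mixed results: not deterministic
```

A test labeled `flaky` should go back to the agent, not into the suite. Ten runs is an arbitrary default; raise it for tests that guard critical paths.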

Documentation Generation

Reading the source and producing accurate, runnable docs — including code samples that the agent has actually executed — is a sweet spot. See the official OpenAI API documentation for examples of structured doc generation pipelines.
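The "code samples the agent has actually executed" idea can be enforced mechanically. This sketch assumes docs are Markdown with samples in Python fences; it extracts each sample, runs it in a fresh namespace, and returns the ones that fail. `check_doc_samples` is a hypothetical helper, and because `exec` runs arbitrary code, it belongs inside the same sandbox as the agent.

```python
import re
import traceback

FENCE = "`" * 3  # built at runtime so this snippet can itself sit inside a Markdown fence
SAMPLE_RE = re.compile(FENCE + r"python\n(.*?)" + FENCE, re.DOTALL)


def check_doc_samples(markdown: str) -> list[tuple[int, str]]:
    """Run every Python-fenced sample in a document; return (index, error) per failure."""
    failures: list[tuple[int, str]] = []
    for index, source in enumerate(SAMPLE_RE.findall(markdown)):
        try:
            # Fresh globals per sample so one example cannot mask another's missing import
            exec(compile(source, f"<sample {index}>", "exec"), {})
        except Exception:
            failures.append((index, traceback.format_exc(limit=1)))
    return failures
```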

Setting Up Your First GPT-5.4 Agent Workflow

If you want to experiment, the friction is lower than you might think. You need an OpenAI API key with GPT-5.4 access, a sandboxed working directory, and a clear scoping policy. Here is a minimal Node.js scaffold.

```javascript
import OpenAI from "openai";

const client = new OpenAI();

async function runAgent(task) {
  const run = await client.responses.create({
    model: "gpt-5.4",
    instructions: task,
    tools: [
      { type: "shell", sandbox: "docker" }, // run commands in isolation
      { type: "file_system", root: "./sandbox" },
      { type: "web_search" },
    ],
    max_tool_calls: 30,
    on_step: (step) => {
      // Stream every tool call so a human can watch in real time
      console.log(`[${step.tool}] ${step.summary}`);
    },
  });
  return run.output_text;
}

// Top-level await requires an ES module (a .mjs file or "type": "module" in package.json)
const result = await runAgent(
  "Audit package.json for dependencies with known CVEs and " +
    "produce a Markdown report with severity, fix version, and changelog link."
);

console.log(result);
```

The sandbox: "docker" flag is the single most important line in this snippet. Never let an autonomous agent run shell commands directly on your host. Containerize the workspace, mount only what the task needs, and revoke network access for tasks that do not require it.
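If your harness does not offer a `sandbox` flag, you can build the isolation yourself with plain Docker. The sketch below only assembles an argument list for `docker run` (pass it to `subprocess.run` to execute). The flags are standard Docker CLI options; the function name, default image, and resource limits are illustrative assumptions to tune for your workload.

```python
def sandbox_command(
    workspace: str,
    command: str,
    image: str = "python:3.12-slim",
    allow_network: bool = False,
    writable: bool = True,
) -> list[str]:
    """Build a `docker run` argv that isolates one agent shell command."""
    # Mount only the task's workspace; add :ro when the task should not edit files
    mount = f"{workspace}:/workspace" + ("" if writable else ":ro")
    argv = [
        "docker", "run", "--rm",              # throwaway container per command
        "--read-only", "--tmpfs", "/tmp",     # read-only root fs, scratch space in /tmp
        "--cap-drop", "ALL",                  # drop all Linux capabilities
        "--security-opt", "no-new-privileges",
        "--pids-limit", "256", "--memory", "2g",
        "-v", mount, "-w", "/workspace",
    ]
    if not allow_network:
        argv += ["--network", "none"]         # no network unless the task needs it
    argv += [image, "sh", "-c", command]
    return argv
```

Defaulting to `--network none` and opting in per task matches the advice above: network access is something a task earns, not something it inherits.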

Security and Safety Considerations

An agent that can execute arbitrary code is also an agent that can execute arbitrary malicious code if you prompt-inject it. The risk is not theoretical — any document the agent reads (a README, an issue comment, a webpage) can contain instructions designed to subvert it.

- Sandbox everything: Run agent shell sessions inside ephemeral containers with no access to credentials beyond the current task.

- Use scoped tokens: A GitHub token for an agent should be limited to one repo and expire in hours, not weeks.

- Require approval for irreversible actions: Pushes, deploys, database writes, and outbound emails should always pause for a human.

- Log every tool call: A complete audit trail is non-negotiable. If something goes wrong, you need to reconstruct what the agent did and why.

- Pin model versions: A silent upgrade can change agent behavior overnight. Pin `gpt-5.4-2026-04-15` or whatever specific snapshot you tested against.
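Logging every tool call and recording the pinned snapshot can share one mechanism. This sketch assumes your harness invokes tools as ordinary Python functions. The hypothetical `audited` decorator writes one JSON line per call, including failed calls, and stamps each entry with the model string; the log format is an assumption, and the snapshot name is the example from the list above.

```python
import functools
import json
import time
from typing import Any, Callable

MODEL = "gpt-5.4-2026-04-15"  # pinned snapshot, recorded with every log entry


def audited(log_path: str) -> Callable:
    """Decorator: append one JSON line per tool call, whether it succeeds or raises."""
    def wrap(tool: Callable[..., Any]) -> Callable[..., Any]:
        @functools.wraps(tool)
        def inner(*args: Any, **kwargs: Any) -> Any:
            entry = {"ts": time.time(), "model": MODEL, "tool": tool.__name__,
                     "args": args, "kwargs": kwargs}
            try:
                result = tool(*args, **kwargs)
                entry["status"] = "ok"
                return result
            except Exception as exc:
                entry["status"] = f"error: {exc!r}"
                raise  # log the failure, then let the caller handle it
            finally:
                # Runs on both paths, so no call escapes the audit trail
                with open(log_path, "a", encoding="utf-8") as fh:
                    fh.write(json.dumps(entry, default=str) + "\n")
        return inner
    return wrap
```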

For a deeper treatment of agentic security risks, the OWASP Top 10 for LLM Applications is the most thorough public framework available.

Common Pitfalls When Adopting GPT-5.4

The early adopter community is already documenting predictable failure modes. Avoid these traps when you start your own pilot.

Treating the Agent as Infallible

A 78% SWE-bench score also implies a 22% failure rate. If that rate held on a real backlog, roughly one in five PRs would be subtly wrong. Code review does not become optional; it becomes more important, because the volume goes up.
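To see why volume raises the stakes, assume (optimistically) that the benchmark rate carries over to your backlog and that PRs fail independently. Then 100 agent PRs contain about 22 bad ones on average, and a batch of just ten has about a 92% chance of containing at least one. The arithmetic, as a sketch with hypothetical helper names:

```python
def expected_bad_prs(n_prs: int, success_rate: float) -> float:
    """Expected number of wrong PRs if each succeeds independently at success_rate."""
    return n_prs * (1 - success_rate)


def p_at_least_one_bad(batch: int, success_rate: float) -> float:
    """Probability that a batch of independent PRs contains at least one failure."""
    return 1 - success_rate ** batch
```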

Skipping the Scoping Step

Agents perform dramatically better on narrow, well-specified tasks than on vague ones. “Make the API faster” is a recipe for hallucinated optimizations. “Reduce p99 latency on /checkout below 300ms by adding a Redis cache for product lookups” is a task an agent can actually finish.
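The scoped version works because it reduces to a yes/no check the agent can run after each change. A sketch, assuming you can collect per-request latencies for /checkout in milliseconds. It uses the nearest-rank definition of a percentile (interpolating definitions give slightly different values), and the function names are hypothetical.

```python
import math


def percentile(samples_ms: list[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% of data at or below it."""
    if not samples_ms:
        raise ValueError("no samples")
    ordered = sorted(samples_ms)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]


def checkout_goal_met(samples_ms: list[float], target_ms: float = 300.0) -> bool:
    """Success criterion for the scoped task: p99 latency on /checkout below target."""
    return percentile(samples_ms, 99) < target_ms
```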

Ignoring Cost Controls

An 8-hour agent run with parallel tool calls can burn through hundreds of dollars in tokens. Set hard budget caps in your OpenAI dashboard and use max_tool_calls liberally during development.
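Dashboard limits are the backstop; a per-run meter in your own harness stops a single runaway task sooner. This sketch assumes you can read input and output token counts from each response's usage data. `CostMeter` is hypothetical, and the per-million-token prices are parameters you must fill in from OpenAI's current price list, since none are given here.

```python
from dataclasses import dataclass


class BudgetExhausted(Exception):
    """Raised when a run's estimated spend passes its hard cap."""


@dataclass
class CostMeter:
    input_price_per_m: float   # USD per million input tokens (fill in yourself)
    output_price_per_m: float  # USD per million output tokens (fill in yourself)
    cap_usd: float
    spent_usd: float = 0.0

    def record(self, input_tokens: int, output_tokens: int) -> float:
        """Add one call's estimated cost; raise once the cap is exceeded."""
        self.spent_usd += (input_tokens * self.input_price_per_m
                           + output_tokens * self.output_price_per_m) / 1_000_000
        if self.spent_usd > self.cap_usd:
            # The call that crosses the cap is still counted in spent_usd
            raise BudgetExhausted(f"spent ${self.spent_usd:.2f} of ${self.cap_usd:.2f} cap")
        return self.spent_usd
```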

Giving the Agent Production Credentials

Repeat after me: agents do not need production database passwords. Ever. If a workflow seems to require them, redesign the workflow.
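One concrete way to enforce this is to start agent processes with an allowlisted environment instead of inheriting yours, so credentials sitting in your shell never reach the agent. A sketch using the standard library; the allowlist and the helper names are assumptions to adapt.

```python
import os
import subprocess
from typing import Optional

# Only these variables reach the agent's processes; everything else in your
# session (cloud keys, DATABASE_URL, personal tokens) is dropped.
ALLOWED_ENV = frozenset({"PATH", "HOME", "LANG", "TERM"})


def agent_env(extra: Optional[dict[str, str]] = None) -> dict[str, str]:
    """Build a minimal environment: allowlisted variables plus task-scoped extras."""
    env = {k: v for k, v in os.environ.items() if k in ALLOWED_ENV}
    env.update(extra or {})
    return env


def run_agent_command(cmd: list[str], extra: Optional[dict[str, str]] = None) -> str:
    # env= replaces the parent environment for the child instead of merging with it
    done = subprocess.run(cmd, env=agent_env(extra), capture_output=True, text=True, check=True)
    return done.stdout
```

Because `env=` replaces the child's environment wholesale, anything not on the allowlist simply does not exist from the agent's point of view.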

The Road Ahead for Autonomous Coding Agents

GPT-5.4 is not the end of the story — it is the moment agentic coding stopped being a research curiosity and started being a product category. Expect the next twelve months to bring fierce competition from Anthropic, Google DeepMind, and a wave of specialized vertical agents focused on security review, infrastructure-as-code, or data engineering.

The honest forecast is messy: enormous productivity gains in narrow domains, painful failures in others, and a steady reshaping of what “writing software” actually means day to day. The teams that win will be the ones who treat the agent as a junior collaborator with superpowers and blind spots, not as a magic box. For background on how agentic systems differ from chatbots, the Wikipedia overview of software agents is a useful primer.

Frequently Asked Questions About GPT-5.4

Is GPT-5.4 available to all OpenAI API users?

At launch, GPT-5.4 is available in a tiered rollout. Tier 3 and above API customers have immediate access; lower tiers are gated behind a waitlist. ChatGPT Pro and Enterprise users get the agentic features inside the chat product within the same week.

How does GPT-5.4 differ from Anthropic’s Claude or Google’s Gemini agents?

All three vendors are converging on similar capabilities, but the implementations differ. GPT-5.4 emphasizes a single trained model with built-in tool use. Claude tends to favor smaller, composable agent loops. Gemini integrates tightly with Google Cloud and Workspace. The right choice depends on which ecosystem your stack already lives in.

Can GPT-5.4 replace human developers?

No, and OpenAI has been careful not to claim that. It can replace specific repetitive tasks — boilerplate generation, dependency updates, basic bug fixes — and it can dramatically speed up exploratory work. Architecture, judgment, code review, and product thinking remain human responsibilities.

What programming languages does GPT-5.4 support best?

Performance is strongest in Python, TypeScript, JavaScript, Go, and Rust, in roughly that order. Less common languages like Elixir, Crystal, or Zig still work but show higher hallucination rates on niche library APIs.

How do I prevent the agent from going off the rails?

Three guardrails handle most of the risk: a sandboxed execution environment, a hard max_tool_calls cap, and an approval gate for any action that touches the outside world. Combine those with structured logging and you can give the agent meaningful autonomy without losing oversight.

Does using GPT-5.4 expose my proprietary code to OpenAI?

API requests are not used to train OpenAI models by default, and Enterprise customers get additional zero-retention guarantees. Still, sending source code over any third-party API is a policy decision your security team should sign off on, ideally with a documented threat model.

Conclusion

GPT-5.4 marks a real inflection point for autonomous software workflows. The combination of a million-token context window, native tool orchestration, built-in self-verification, and an 8-hour autonomy budget moves agentic coding from “interesting demo” to “viable production tool” — provided you wrap it in the right guardrails.

The teams getting value from GPT-5.4 today share a pattern: they scope tasks tightly, sandbox aggressively, require human approval for irreversible actions, and treat the agent’s output as a first draft rather than a final answer. Adopt that discipline and you can capture most of the upside without inheriting the failure modes that have plagued earlier autonomous coding experiments.

Start small. Pick one repetitive workflow on your team — dependency upgrades, changelog generation, flaky test triage — and build a single GPT-5.4 agent around it. Measure the time saved, the error rate, and the review burden honestly. If the numbers work, expand. If they do not, you will have learned exactly where the model’s current limits sit, which is information that will be worth more than the latest benchmark score the next time a new GPT version drops.
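Measuring honestly means charging the agent for the human time it still consumes. A minimal sketch of a pilot summary, assuming you log per-task numbers yourself; the field names and the net-savings formula are one reasonable choice, not a standard metric.

```python
from dataclasses import dataclass


@dataclass
class PilotTask:
    baseline_minutes: float     # human time the task took before the pilot
    supervision_minutes: float  # human time spent scoping, prompting, and watching the agent
    review_minutes: float       # reviewer time spent on the agent's PR
    merged_without_rework: bool


def summarize(tasks: list[PilotTask]) -> dict[str, float]:
    """Net time saved, error rate, and review burden across a pilot."""
    if not tasks:
        raise ValueError("no pilot tasks recorded")
    n = len(tasks)
    return {
        # Subtract all the human time the agent still consumed
        "net_minutes_saved": sum(
            t.baseline_minutes - t.supervision_minutes - t.review_minutes for t in tasks
        ),
        "error_rate": sum(not t.merged_without_rework for t in tasks) / n,
        "avg_review_minutes": sum(t.review_minutes for t in tasks) / n,
    }
```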